The Ethics of AI in Content Creation: Where to Draw the Line

The rise of artificial intelligence in content creation has brought remarkable gains in speed and scale. From generating blog posts and social media updates to drafting complex reports and creative narratives, AI tools are transforming how we produce information. Yet this power carries a profound responsibility: using AI ethically. That means more than avoiding plagiarism or misinformation; it means preserving authenticity, ensuring transparency, and maintaining the integrity of the human connection that content seeks to foster. Knowing where to draw the line isn’t merely good practice; it’s essential for building trust, safeguarding your brand, and ensuring a sustainable, ethical future for content creation.

Navigating the Ethical Tightrope: When AI Becomes More Than Just a Tool

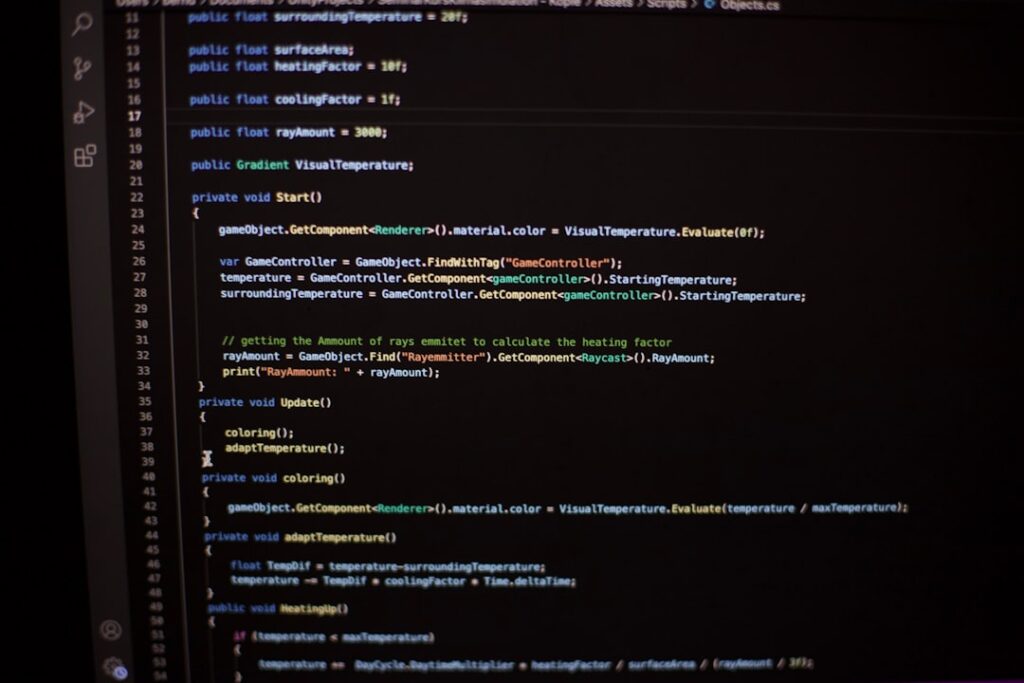

The core of the ethical dilemma lies in defining AI’s role: is it a sophisticated assistant, or is it an autonomous creator? When AI merely helps with research, grammar checks, or idea generation, its position as a tool is clear. The human creator remains firmly in control, guiding the narrative and injecting unique insights. However, as AI systems become more advanced, capable of generating entire articles, stories, or marketing copy with minimal human input, the line blurs. This shift demands a deeper look into the implications for originality, accountability, and the very definition of authorship.

Distinguishing AI Assistance from AI Authorship

- AI as a Facilitator: In this scenario, AI streamlines tasks such as keyword research, outlining, grammar correction, or translation of existing content. The human writer remains the primary author, making all strategic decisions and supplying personality, nuance, and critical thinking.

- AI as a Primary Generator: Here, AI produces the bulk of the content, often from a simple prompt. While human editing and fact-checking are still crucial, the generative aspect of AI becomes dominant. This raises questions about who truly “owns” the creative output and who is accountable for its accuracy and impact.

The ethical tightrope walk begins when we allow AI to take on roles traditionally reserved for human intellect and creativity. It’s vital to acknowledge that while AI can mimic human writing, it lacks genuine understanding, consciousness, or lived experience. This fundamental difference should always inform where we draw the line in its application.

The Disclosure Imperative: Setting Expectations for AI-Assisted Content

Transparency is the cornerstone of trust, especially when AI is involved. Readers, consumers, and audiences have a right to know if the content they are consuming was generated, in whole or in part, by artificial intelligence. Failing to disclose AI involvement can lead to feelings of deception, erode credibility, and ultimately damage your brand’s reputation. This isn’t just about legal compliance; it’s about ethical responsibility and respecting your audience.

Why and How to Be Transparent

- Building Trust: Openly stating AI’s role fosters honesty. When readers know what to expect, they can engage with content more authentically.

- Managing Expectations: AI-generated content, while impressive, might lack the unique voice, depth, or nuanced perspective of human-crafted pieces. Disclosure helps manage these expectations.

- Ethical Obligation: It respects the audience’s right to informed consumption. Just as you wouldn’t misrepresent the source of information, you shouldn’t misrepresent the creator.

Drawing the line here means establishing clear guidelines for when and how to disclose AI usage. This could range from a simple disclaimer at the beginning or end of an article (e.g., “This article was created with AI assistance and reviewed by a human editor”) to more granular details about which parts were AI-generated. The level of disclosure often depends on the extent of AI involvement and the nature of the content. For sensitive topics, or content requiring deep empathy and personal experience, explicit human authorship and review become even more critical.
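For teams that want their disclosure guidelines applied consistently, the policy can be written down as a small rule table. The sketch below is one hypothetical way to do that: the involvement levels, the function name `disclosure_for`, and the rule that AI-generated drafts on sensitive topics are rejected outright are illustrative assumptions, not an established standard. Only the first disclaimer string comes from the example above.

```python
from enum import Enum


class AIInvolvement(Enum):
    """Hypothetical levels of AI involvement; adapt them to your own policy."""
    NONE = 0
    ASSISTED = 1   # AI as facilitator: research, outlines, grammar
    GENERATED = 2  # AI as primary generator, then human-edited


def disclosure_for(involvement: AIInvolvement, sensitive_topic: bool,
                   human_reviewed: bool) -> str:
    """Return the disclosure text to publish, or raise if the piece should not ship.

    Assumed policy (illustrative only): every AI-involved piece needs human
    review, and sensitive topics may not be primarily AI-generated.
    """
    if involvement is AIInvolvement.NONE:
        return ""  # no AI involvement, nothing to disclose
    if not human_reviewed:
        raise ValueError("AI-involved content must be reviewed by a human first")
    if sensitive_topic and involvement is AIInvolvement.GENERATED:
        raise ValueError("sensitive topics require human authorship")
    if involvement is AIInvolvement.ASSISTED:
        return ("This article was created with AI assistance "
                "and reviewed by a human editor.")
    # GENERATED, non-sensitive, human-reviewed: use a more explicit disclosure.
    return ("Most of this article was drafted by an AI tool; "
            "a human editor reviewed, fact-checked, and revised it.")
```

Encoding the policy this way makes the "extent of AI involvement" decision explicit and auditable, but the categories and wording remain editorial choices each publisher has to make for itself.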

Safeguarding Originality and Intellectual Property in an AI-Driven Landscape

One of the most complex ethical frontiers in AI content creation is the realm of originality and intellectual property (IP). AI models are trained on vast datasets of existing content, raising questions about potential plagiarism, copyright infringement, and the very concept of “originality” when algorithms are synthesizing ideas. Where do we draw the line to protect human creators and ensure fair use of existing works?

The Plagiarism and Copyright Conundrum

- Data Sourcing: AI models learn from existing data. If that data includes copyrighted material, does content the AI generates from it infringe copyright? The law here is still developing, but the ethical stance should lean toward caution.

- Synthesized Originality: While AI can create novel combinations of ideas, it doesn’t “think” in the human sense. Its output is a sophisticated pastiche. Ensuring that this synthesis doesn’t inadvertently recreate or closely mimic existing, protected works is paramount.

To draw the line effectively, content creators must implement rigorous review processes. This includes running plagiarism checkers, manually verifying facts, and ensuring that human input substantially transforms AI output into genuinely new work. For businesses, establishing clear internal policies on AI use, including guidelines for vetting AI-generated content for IP issues, is crucial. Staying informed about evolving legal interpretations, including the World Intellectual Property Organization’s (WIPO) work on AI and IP, is equally essential.
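To make "closely mimic existing works" more concrete, here is a minimal sketch of one signal a review step might compute: word n-gram overlap (sometimes called shingling) between an AI draft and sources you already know about. This is an illustrative assumption, not how any particular plagiarism checker works; commercial tools compare against large indexed corpora, while this only checks the texts you pass in. The function names, the 5-word window, and the 0.2 threshold are hypothetical choices.

```python
import re


def word_ngrams(text: str, n: int = 5) -> set[tuple[str, ...]]:
    """Lowercase the text, split it into words, and collect every run of n words."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(draft: str, source: str, n: int = 5) -> float:
    """Fraction of the draft's n-grams that also appear verbatim in the source."""
    draft_grams = word_ngrams(draft, n)
    if not draft_grams:
        return 0.0  # draft shorter than n words: nothing to compare
    return len(draft_grams & word_ngrams(source, n)) / len(draft_grams)


def needs_review(draft: str, known_sources: list[str],
                 threshold: float = 0.2, n: int = 5) -> list[int]:
    """Indices of known sources whose overlap with the draft meets the threshold."""
    return [i for i, src in enumerate(known_sources)
            if overlap_ratio(draft, src, n) >= threshold]
```

A flagged source is a prompt for a human editor to compare the passages, not a verdict; low overlap likewise does not prove the draft avoids close paraphrase of protected work.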

Mitigating Bias and Ensuring Fairness in AI-Assisted Narratives

AI models learn from the data they are fed, and if that data contains biases (as much historical human-generated data does), the AI can perpetuate and even amplify those biases in its output. The result can be content that is unfair, discriminatory, or misrepresentative, which raises serious ethical concerns, especially for narratives that shape public perception or address sensitive topics.

Identifying and Correcting AI Biases

- Reflecting Societal Biases: AI can inadvertently perpetuate stereotypes related to gender, race, culture, or socio-economic status if its training data is skewed.

- Harmful Outputs: Biased AI can generate content that excludes certain groups, promotes misinformation, or reinforces harmful narratives, damaging both individuals and society.

Drawing the line here means committing to active bias detection and mitigation. This involves:

- Diverse Data Sourcing: Advocating for and using AI models trained on more diverse and representative datasets.

- Human Oversight: Rigorously reviewing AI-generated content for any signs of bias, stereotyping, or unfair representation before publication. Human editors must be the ultimate arbiters of fairness and inclusivity.

- Ethical Guidelines: Developing internal ethical guidelines that explicitly address bias in AI-generated content and provide mechanisms for identifying and correcting it.

Content creators have a moral obligation to ensure their content is fair and equitable. Relying solely on AI without critical human review risks propagating harmful biases, undermining the credibility of the content, and potentially alienating vast segments of the audience.

Crafting Your Ethical Framework: Practical Lines to Draw for Responsible AI Use

There is no one-size-fits-all way to set boundaries for AI in content creation. It requires a thoughtful, proactive approach tailored to your specific goals, audience, and brand values. The resulting framework serves as your compass, guiding decisions on when, where, and how AI should be integrated into your content workflow without compromising integrity.

Key Pillars for Defining Your Ethical Boundary

- Purpose-Driven AI Use: Clearly define *why* you are using AI. Is it for efficiency, ideation, or analysis? Avoid using AI merely for the sake of it, especially for tasks that require deep human empathy or unique creative vision.

- Human-in-the-Loop Principle: Always ensure a human expert reviews, edits, and approves AI-generated content. This “human oversight” is critical for fact-checking, tone, nuance, and ensuring the content aligns with your brand’s voice and values. This principle is even emphasized in Google’s guidelines on AI-generated content.

- Transparency Standards: Develop a clear policy on how and when you will disclose AI involvement to your audience. This builds trust and sets realistic expectations.

- Accountability Matrix: Establish who is ultimately responsible for the content, regardless of AI involvement. The human editor/publisher always holds the final accountability for accuracy, ethics, and impact.

- Continuous Learning and Adaptation: